The quiet layer between your agents and every approved model.

PipeLLM gives teams one governed surface for model routing, runtime controls, managed tools, and audit review without forcing a new SDK or a louder visual story than the product deserves.

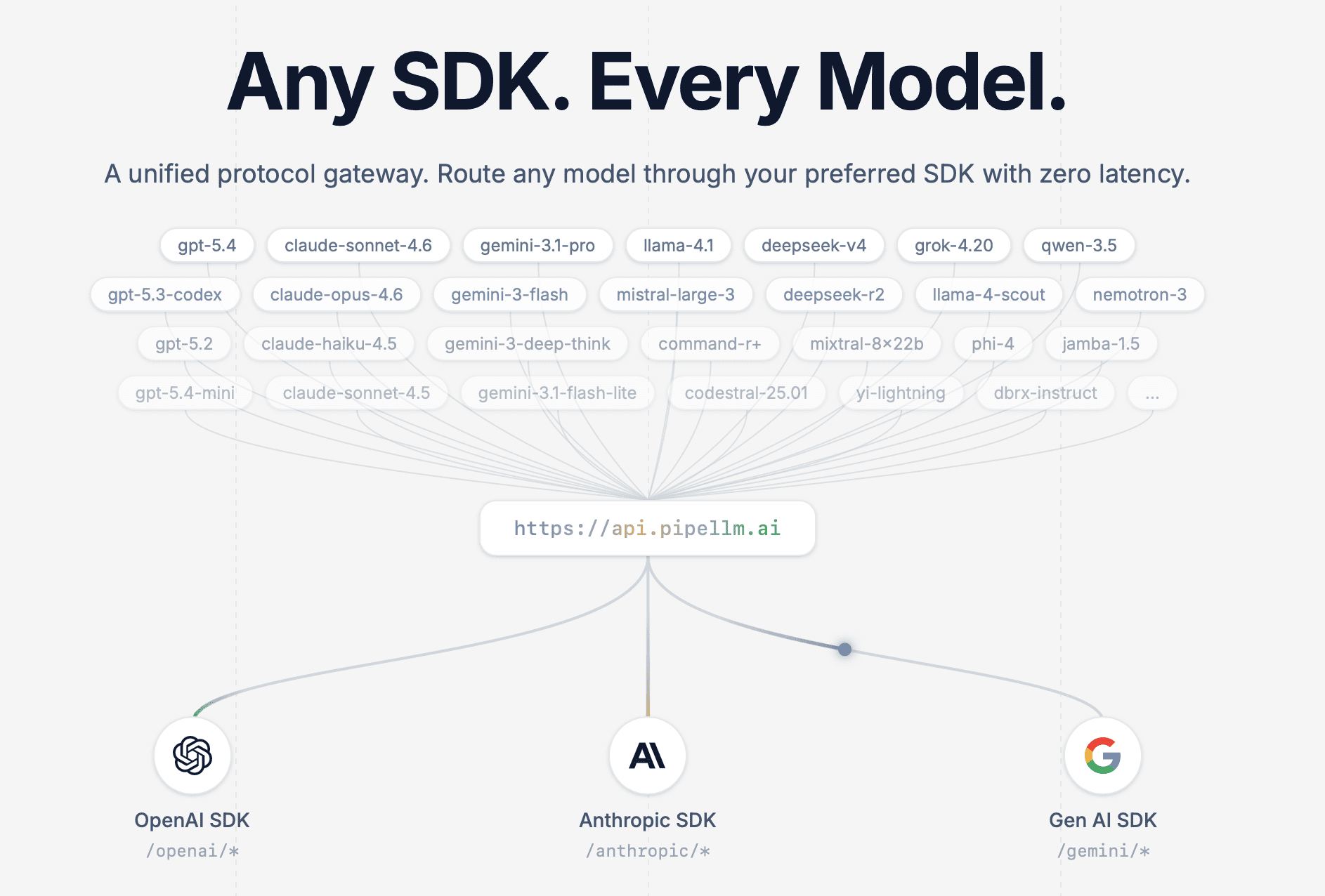

Unified endpoint

One base URL. Multiple protocols. Governed execution.

https://api.pipellm.ai

/openai/*

OpenAI-compatible

Keep existing clients while PipeLLM governs routing and model access.

/anthropic/*

Anthropic-compatible

Preserve existing tool and message flows with one gateway policy layer.

/gemini/*

Google Gen AI-compatible

Route approved Gemini-family traffic through the same operational surface.
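As a rough sketch of how one base URL can serve several protocols, the helper below joins the base URL and the three route prefixes listed above with a provider-style path. The function name `gateway_url` and the example path passed to it are assumptions for illustration; the page documents only the base URL and the prefixes.

```python
BASE_URL = "https://api.pipellm.ai"

# Route prefixes as listed on the page. Anything after the prefix is assumed.
PROTOCOL_PREFIXES = {
    "openai": "/openai/",
    "anthropic": "/anthropic/",
    "gemini": "/gemini/",
}


def gateway_url(protocol: str, path: str) -> str:
    """Join the shared base URL, a protocol prefix, and a provider-style path."""
    if protocol not in PROTOCOL_PREFIXES:
        raise ValueError(f"unsupported protocol: {protocol!r}")
    return BASE_URL + PROTOCOL_PREFIXES[protocol] + path.lstrip("/")


# Hypothetical path, shown only to illustrate the join.
print(gateway_url("openai", "/v1/chat/completions"))
```

Keeping the prefix table in one place means a client can switch protocols by changing a single key, while the host stays the same.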

Model gateway

Protocol translation and provider abstraction with an intentionally quiet interface.

Governance

Approvals, cost guardrails, and audit trails for production teams.

Runtime layers

Managed tools and runtime surfaces that stay underneath the stack teams already use.

Keep the SDK. Replace the layer underneath it.

PipeLLM lets teams keep familiar clients while moving execution onto a governed gateway with protocol translation, approved provider access, and runtime-aware controls.

View integrations

SDK-compatible request

Same client ergonomics. Controlled execution path.
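A minimal sketch of what an SDK-compatible request could look like, using only the Python standard library. It builds an OpenAI-style chat request aimed at the gateway but does not send it. The path after `/openai/`, the bearer-token header, and the function name `build_chat_request` are assumptions, not documented PipeLLM behavior.

```python
import json
import urllib.request

# Assumed path: the page documents only the /openai/* prefix.
CHAT_URL = "https://api.pipellm.ai/openai/v1/chat/completions"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("YOUR_KEY", "an-approved-model", "Hello")
print(request.get_method(), request.full_url)
```

The request body matches what an existing OpenAI-style client would already send; under these assumptions only the URL changes.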

One runtime layer for production agents.

Managed tools, policy controls, approval trails, and operator visibility in one runtime layer.

View the platform docs

Runtime sessions

Stateful runs with visible context and operator handoff.

Policy routing

Approved models, budgets, and permissions applied before execution.

Production reliability

Fallback and review state without cluttering the product surface.

Operating sequence

How PipeLLM governs an agent stack.

Four stages, from session start to operator review.

Session starts

User, workspace, model, and run state open under one runtime session.

Policies apply

Allowed models, budget rules, and action permissions are checked first.

Tools execute

Managed services like WebSearch run under the same traceable control layer.

Operators review

Approval trails, bad runs, and production follow-up stay visible afterward.
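The four stages above can be sketched as a small in-memory model: a session carries the run state, policy checks run before the tool does, and every decision lands in a trace that an operator can read afterward. All class, field, and function names here are hypothetical; this is not PipeLLM's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class Policy:
    allowed_models: Set[str]
    budget_usd: float
    allowed_actions: Set[str]


@dataclass
class Session:
    user: str
    workspace: str
    model: str
    spent_usd: float = 0.0
    trace: List[str] = field(default_factory=list)  # reviewed by operators later


def run_action(session: Session, policy: Policy, action: str,
               estimated_cost_usd: float, tool: Callable[[], str]) -> Optional[str]:
    """Check model, budget, and permission first; execute the tool only if all pass."""
    if session.model not in policy.allowed_models:
        session.trace.append(f"denied:{action}:model")
        return None
    if session.spent_usd + estimated_cost_usd > policy.budget_usd:
        session.trace.append(f"denied:{action}:budget")
        return None
    if action not in policy.allowed_actions:
        session.trace.append(f"denied:{action}:permission")
        return None
    result = tool()
    session.spent_usd += estimated_cost_usd
    session.trace.append(f"executed:{action}")
    return result


policy = Policy({"model-a"}, budget_usd=1.0, allowed_actions={"websearch"})
session = Session(user="dev", workspace="production", model="model-a")
run_action(session, policy, "websearch", 0.5, lambda: "results")
run_action(session, policy, "refund.create", 0.1, lambda: "refund")
print(session.trace)
```

Denied actions are recorded rather than silently dropped, which is what makes the later review stage possible in this sketch.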

Review agent runs like a trace debugger, not a generic audit log.

PipeLLM Runtime Audit captures model hops, tool calls, approval pauses, and final outcome labels in one operator surface so teams can understand exactly why an agent behaved the way it did.

Run steps

Three execution events leading into the approval gate.

Step 1

Planner node

Plans the tool and response path.

Step 2

WebSearch tool

Managed tool call under workspace controls.

Step 3

Approval gate

Pauses a sensitive action for human review.

Selected node

Approval gate: refund.create

Policy class

payments.refund.requires_human_review

Triggered by

tool_call: refund.create

Reviewer

ops@pipellm.ai

Wait time

26s

Workspace

production

Run cost

$0.018

Request context

Decision

Decision

Approved

Approved by

ops@pipellm.ai

Queue

refund-risk-review

Reason

Matches existing refund policy and customer entitlement.

Execution path

resume after approval

Outcome

Final answer, reviewer verdict, and follow-up queue stay attached to this trace.
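One way to picture the approval gate in this trace is as a small record that stays paused until a reviewer decides. The sketch below reuses the field values shown in the panel; the class name `ApprovalGate`, its methods, and the "halt" path for a rejection are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ApprovalGate:
    policy_class: str
    triggered_by: str
    queue: str
    decision: Optional[str] = None  # None while the run is paused
    approved_by: Optional[str] = None
    reason: Optional[str] = None

    def decide(self, reviewer: str, approved: bool, reason: str) -> None:
        """Record the reviewer's verdict on the paused action."""
        self.decision = "approved" if approved else "rejected"
        self.approved_by = reviewer if approved else None
        self.reason = reason

    def execution_path(self) -> str:
        """Return what the run does next, given the current decision."""
        if self.decision is None:
            return "paused"
        if self.decision == "approved":
            return "resume after approval"
        return "halt"  # assumed behavior; the page shows only the approved case


gate = ApprovalGate(
    policy_class="payments.refund.requires_human_review",
    triggered_by="tool_call: refund.create",
    queue="refund-risk-review",
)
print(gate.execution_path())
gate.decide("ops@pipellm.ai", True, "Matches existing refund policy and customer entitlement.")
print(gate.execution_path())
```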

Node-level trace

Inspect model steps, tool calls, retries, and route changes without leaving the run view.

Approval review

See which policy paused the run, who approved it, and exactly where execution resumed.

Outcome labels

Attach human verdicts, bad-run labels, and follow-up queues directly to the trace.

View platform docs

PipeLLM WebSearch

Equip your AI agents with real-time web context via a single unified API.

Agent action

research: summarize AWS agent announcements this week

PipeLLM route

https://api.pipellm.ai/v1/websearch/search?q=aws+agent...

Vanilla LLM

"I don't have real-time data for this week's AWS announcements. My knowledge cutoff is 2023."

PipeLLM gateway

[{
  "title": "AWS updates Bedrock Agents...",
  "url": "https://aws.amazon.com/...",
  "content": "Memory retention and dynamic routing..."
}]

Grounded agent

This week, AWS announced major Bedrock Agent updates:

- Memory: Retention across sessions.

- Routing: Dynamic model routing.
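The flow above (agent action, search route, JSON results, grounded answer) can be sketched in two small helpers. The endpoint and the `q` parameter come from the route shown on the page, and the `title`, `url`, and `content` keys come from the sample response. The function names, the example query, and the sample result are hypothetical, and no network call is made.

```python
from typing import Dict, List
from urllib.parse import urlencode

SEARCH_ENDPOINT = "https://api.pipellm.ai/v1/websearch/search"


def search_url(query: str) -> str:
    """Build the search URL, encoding the query into the q parameter."""
    query_string = urlencode({"q": query})
    return f"{SEARCH_ENDPOINT}?{query_string}"


def grounding_context(results: List[Dict[str, str]]) -> str:
    """Turn search results into numbered context an agent can cite in its prompt."""
    blocks = []
    for index, item in enumerate(results, start=1):
        blocks.append(f"[{index}] {item['title']} ({item['url']})\n{item['content']}")
    return "\n\n".join(blocks)


# Hypothetical query and result, shaped like the sample response above.
print(search_url("bedrock agents"))
sample = [{"title": "Example title", "url": "https://example.com", "content": "Example snippet."}]
print(grounding_context(sample))
```

Numbering each result gives the model a stable handle for citing sources in its answer, which is one common way to keep a grounded response traceable.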

Deep Search·Live web research.

Simple Search·Lightweight lookup.

Reader·Page extraction.

News Search·Live launch tracking.

Notes from the engineering surface behind PipeLLM.

Essays, product notes, and technical guidance for teams building with multi-model AI systems.

Bring your agent stack into production without making it louder.

Start with managed runtime and tool access, then layer in governance, approvals, and observability as your AI surface becomes real infrastructure.