Agent Memory Is the Next Bottleneck in AI Applications

The AI industry is racing to expand context windows, from 4K to 1M tokens. But a fundamental problem remains: no matter how large the window, Transformer-based models tend to use information at the start and end of a long prompt more reliably than information in the middle (a U-shaped pattern often called "lost in the middle"), and every additional token makes inference slower and more expensive. The direction itself needs rethinking.

The real solution isn't bigger context windows — it's better memory systems.

Context Windows Are Sticky Notes, Not Knowledge Bases

Think of a context window as a sticky note: a temporary scratch pad. It holds the current conversation, but it neither persists after the session ends nor ranks what it holds by importance. Making the sticky note bigger doesn't solve the problem of remembering what happened yesterday, or knowing which past decisions matter for today's task.

A proper memory system is a structured knowledge base — one that can selectively recall the right information at the right time, rather than dumping everything into a single prompt.

RAG Is Not Memory

A common misconception: RAG (Retrieval-Augmented Generation) and memory serve the same purpose. They don't.

- RAG answers "what do I know?" — it's an encyclopedia. It retrieves factual knowledge from a corpus.

- Memory answers "what happened with whom?" — it's a personal diary. It tracks interactions, preferences, and context history.

They're complementary, not interchangeable. An AI coding assistant needs RAG to understand framework documentation, but it needs memory to remember your project's coding conventions and past debugging sessions.

The Two Dimensions of Agent Memory

A well-designed memory architecture needs to handle two orthogonal dimensions simultaneously:

Scope: Private vs. Shared. Some memories belong to a single user session ("this user prefers concise answers"), while others should be shared across an organization ("our API rate limit is 100 RPM").

Duration: Short-term vs. Long-term. Short-term memories (such as the current task context) that are accessed frequently can be promoted automatically to long-term storage, while memories that stop being accessed gradually decay, following the principle of the Ebbinghaus forgetting curve: retention falls off roughly exponentially with the time since the last recall.
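As a rough illustration of the two dimensions, here is a minimal Python sketch. It assumes an exponential retention curve R = exp(-t / S) as a stand-in for the Ebbinghaus principle; the `MemoryItem` fields, the stability values, and the promotion and forgetting thresholds are all hypothetical choices made for this example, not values from any particular memory system.

```python
import math
from dataclasses import dataclass

DAY = 24 * 3600.0  # seconds

@dataclass
class MemoryItem:
    text: str
    scope: str             # "private" (one user) or "shared" (whole organization)
    created_at: float      # timestamps are plain seconds
    last_access: float
    access_count: int = 0
    tier: str = "short"    # "short" or "long"

# Hypothetical tuning values: long-term memories decay much more slowly.
STABILITY = {"short": 1 * DAY, "long": 30 * DAY}
PROMOTE_AFTER = 3      # accesses needed before promotion to long-term
FORGET_BELOW = 0.05    # retention under this value marks an item for eviction

def retention(item: MemoryItem, now: float) -> float:
    """Ebbinghaus-style retention R = exp(-t / S), t = time since last access."""
    elapsed = max(0.0, now - item.last_access)
    return math.exp(-elapsed / STABILITY[item.tier])

def touch(item: MemoryItem, now: float) -> None:
    """Record an access; frequently used short-term items get promoted."""
    item.access_count += 1
    item.last_access = now
    if item.tier == "short" and item.access_count >= PROMOTE_AFTER:
        item.tier = "long"

def should_forget(item: MemoryItem, now: float) -> bool:
    """True once the item's retention has decayed below the threshold."""
    return retention(item, now) < FORGET_BELOW
```

Under these assumed settings, a short-term item left untouched for four days falls below the threshold and can be evicted, while the same item accessed three times is promoted and still retained at that age. The `scope` field is carried along so that a store can decide who is allowed to read each item.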

Three Scenarios That Benefit Most

1. AI-Powered Coding

Coding agents that remember project-specific conventions, architectural decisions, and past debugging outcomes can be dramatically more effective. Instead of re-explaining your stack every session, the agent builds a progressively deeper understanding of your codebase.

2. Personalized Interactions

Customer-facing agents can build dynamic user profiles — remembering preferences, past issues, and communication styles. This turns generic chatbots into genuinely personalized assistants.

3. Multi-Agent Collaboration

In multi-agent systems, shared memory enables collective learning: one agent's mistake becomes every agent's lesson. This is especially powerful for teams deploying multiple specialized agents that need to coordinate without redundant discovery.

From "More Context" to "Smarter Recall"

The paradigm shift is clear: the future isn't about remembering everything — it's about recalling the right information at the right time. This requires:

- Semantic retrieval: Finding related content by meaning, not just keywords

- Relational linking: Connecting "User A modified File B" with "File B depends on Module C"

- Temporal awareness: Distinguishing what happened an hour ago from what happened last week

- Automatic deduplication: Preventing memory bloat from repeated observations
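The four requirements above can be sketched together in a small in-memory store. This is an illustrative sketch only: it assumes embedding vectors are produced elsewhere (the toy three-dimensional vectors below are hand-made), and the `MemoryStore` class, the 0.95 deduplication threshold, and the seven-day recency half-life are hypothetical choices for this example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

class MemoryStore:
    def __init__(self, dedup_threshold=0.95, half_life_days=7.0):
        self.items = []   # each item: {"text", "vec", "day"}
        self.links = {}   # entity -> set of directly related entities
        self.dedup_threshold = dedup_threshold
        self.half_life_days = half_life_days

    def add(self, text, vec, day):
        """Automatic deduplication: refresh a near-duplicate instead of storing it twice."""
        for item in self.items:
            if cosine(item["vec"], vec) >= self.dedup_threshold:
                item["day"] = max(item["day"], day)
                return False
        self.items.append({"text": text, "vec": vec, "day": day})
        return True

    def link(self, src, dst):
        """Relational linking: record a directed edge such as 'modified' or 'depends on'."""
        self.links.setdefault(src, set()).add(dst)

    def related(self, entity, max_hops=2):
        """Entities reachable from `entity` within `max_hops` edges."""
        seen, frontier = set(), {entity}
        for _ in range(max_hops):
            frontier = {n for e in frontier for n in self.links.get(e, ())} - seen - {entity}
            seen |= frontier
        return seen

    def recall(self, query_vec, today, k=3):
        """Semantic retrieval weighted by temporal awareness (exponential recency)."""
        def score(item):
            age = max(0.0, today - item["day"])
            recency = 0.5 ** (age / self.half_life_days)
            return cosine(item["vec"], query_vec) * recency
        ranked = sorted(self.items, key=score, reverse=True)
        return [item["text"] for item in ranked[:k]]

store = MemoryStore()
store.add("Project uses tabs for indentation", [1.0, 0.0, 0.0], day=0)
store.add("Project indents with tabs", [0.99, 0.05, 0.0], day=5)   # near-duplicate, not stored
store.add("Flaky test fixed by pinning a dependency", [0.0, 1.0, 0.0], day=9)
store.link("User A", "File B")     # User A modified File B
store.link("File B", "Module C")   # File B depends on Module C
print(store.related("User A"))     # File B and Module C (set order may vary)
print(store.recall([0.9, 0.1, 0.0], today=10, k=1))
```

Multiplying similarity by a recency factor is only one possible way to combine the signals; a production system might instead use a vector database for retrieval and a graph store for the links.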

Practical Implications for AI Infrastructure

If you're building production AI systems, the memory question intersects directly with your model strategy:

- Model selection becomes memory-aware. Use a large-context model (for example, Gemini 2.5 Pro with its 1M-token window) for deep analysis tasks, and a smaller, cheaper model for quick tool calls. A unified gateway makes it easier to switch between them.

- Cost optimization follows memory optimization. Better memory means less context sent per request, which directly reduces token costs. Because input is typically billed per token, a 50% context reduction translates to roughly 50% savings on input token billing; output token costs are unaffected.

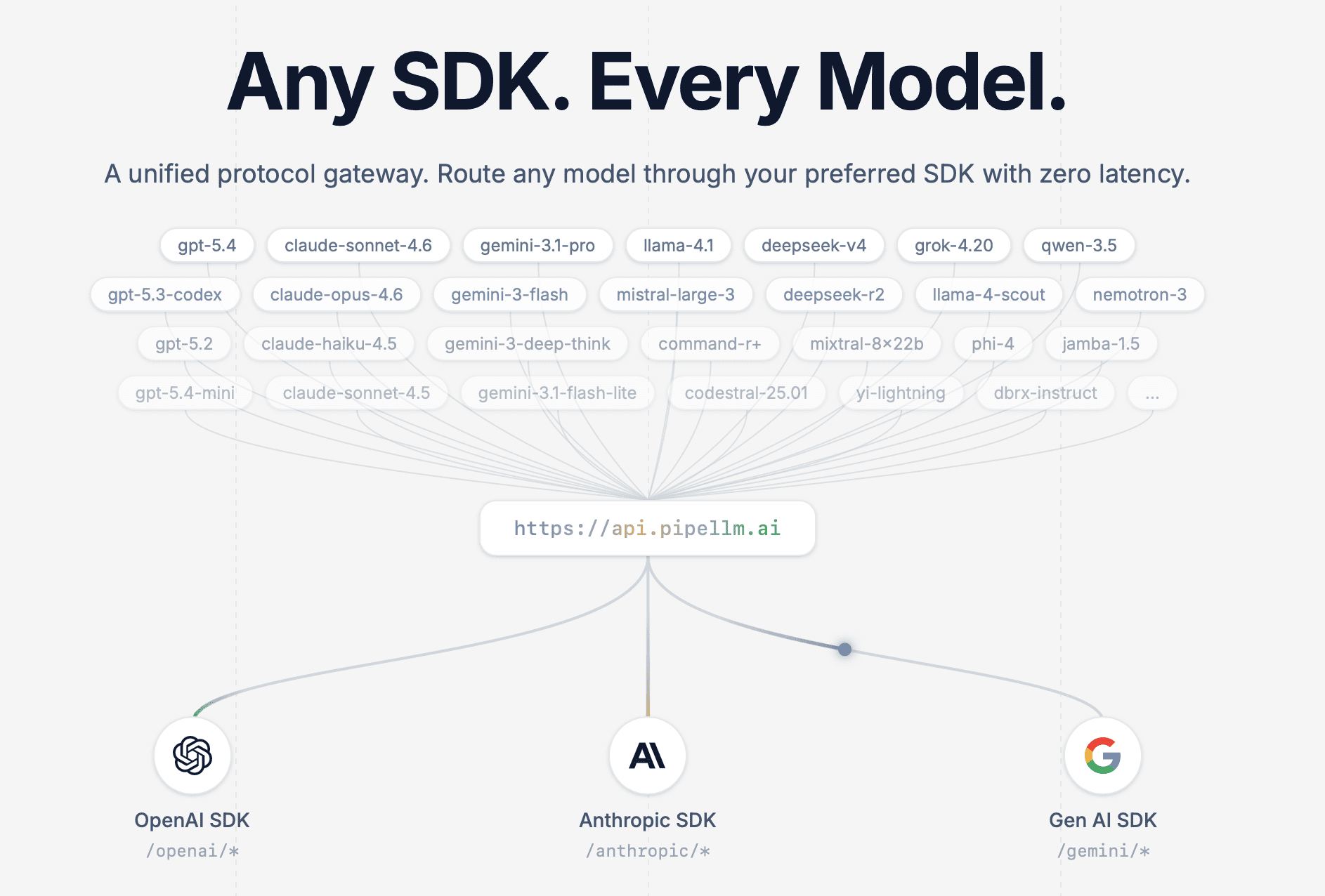

- Provider diversity becomes strategic. Different providers excel at different context sizes and task types. With a platform like PipeLLM, you can route memory-intensive tasks to the optimal model without changing your application code.
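To make the routing and cost points concrete, here is a minimal sketch of memory-aware model selection. The model names, context limits, and prices are hypothetical placeholders rather than any provider's real figures, and the functions are plain Python for illustration, not part of any gateway's API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelOption:
    name: str
    max_context_tokens: int
    usd_per_million_input_tokens: float

# Hypothetical catalogue: substitute your providers' actual limits and prices.
SMALL = ModelOption("small-fast-model", 32_000, 0.20)
LARGE = ModelOption("large-context-model", 1_000_000, 2.00)

def choose_model(prompt_tokens: int, needs_deep_analysis: bool = False) -> ModelOption:
    """Use the large-context model only when the task or prompt size requires it."""
    if needs_deep_analysis or prompt_tokens > SMALL.max_context_tokens:
        return LARGE
    return SMALL

def input_cost_usd(model: ModelOption, prompt_tokens: int) -> float:
    """Input-side cost only, assuming simple linear per-token billing."""
    return prompt_tokens / 1_000_000 * model.usd_per_million_input_tokens
```

With these placeholder prices, trimming a prompt from 100,000 to 50,000 tokens on the large model halves the input cost from $0.20 to $0.10, and trimming it below the small model's limit lets the request move to the cheaper model entirely.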

The Bottom Line

Agent memory is the bridge between AI that follows instructions and AI that truly understands your work. The transition from "stacking context" to "smart recall" isn't a nice-to-have — it's the architectural foundation that will separate useful AI agents from expensive token burners.

For AI to become truly intelligent, it first needs a good memory.

PipeLLM helps you navigate model complexity with a single API endpoint for every major AI provider. Whether you're optimizing for context window size, cost, or speed — route any model through any SDK, zero friction.